WarAI: Anthropic Refuses To Bend The Knee To Hegseth

Update (1745ET): In a statement issued on Anthropic's website, CEO Dario Amodei said there has been "virtually no progress" on negotiations with the Pentagon.

"The contract language we received overnight from the Department of War made virtually no progress on preventing Claude's use for mass surveillance of Americans or in fully autonomous weapons," Anthropic said in the statement.

"New language framed as compromise was paired with legalese that would allow those safeguards to be disregarded at will. Despite DOW's recent public statements, these narrow safeguards have been the crux of our negotiations for months."

Amodei then whined about the consequences of his actions:

"The Department of War has stated they will only contract with AI companies who accede to “any lawful use” and remove safeguards in the cases mentioned above.

They have threatened to remove us from their systems if we maintain these safeguards; they have also threatened to designate us a “supply chain risk” - a label reserved for US adversaries, never before applied to an American company - and to invoke the Defense Production Act to force the safeguards’ removal.

These latter two threats are inherently contradictory: one labels us a security risk; the other labels Claude as essential to national security."

As a reminder, the Friday 1701ET deadline is fast approaching for Anthropic to let the Pentagon use its model Claude as it sees fit or potentially face severe consequences.

Start buying those Deep OTM IGV Calls!

* * *

Tl;dr: Investors are unprecedentedly short Software stocks (due in large part to the threat of Anthropic's Claude Code disrupting the entire SaaS space, calling into question terminal values for all these stocks). Anthropic is currently battling Pete Hegseth's Department of War over guardrails (read 'wokeness' and virtue signaling). If Anthropic does not bend the knee to enabling WarAI (missing out on that sweet military contract), then there is fear that other enterprise clients will drop it (do they really want to be integrating a government 'blacklisted' technology into their systems?), leaving developers questioning its use-case as a 'disruptor'... prompting the mass of shorts in the SaaS space to cover...

Background

Anthropic, the AI company behind the Claude model which has singlehandedly generated hundreds of billions in SaaS-related losses over fears it will replace the entire current software stack, is currently engaged in a high-profile contract dispute with the US Department of War (DoW) under Secretary Pete Hegseth in the Trump administration.

In July 2025, Anthropic secured a $200 million contract with the DoW to provide AI capabilities for national security purposes. Claude became the first (and reportedly only) frontier AI model deployed on classified DoW networks, integrated through partners like Palantir. The original agreement included Anthropic's usage-guardrails, prohibiting applications such as fully autonomous lethal weapons (where AI makes final targeting decisions without human oversight) and mass surveillance of US citizens.

Everything was awesome for a while... but problems arose in early 2026, reportedly sparked by Claude's use in the January US military operation to capture former Venezuelan President Nicolás Maduro. Anthropic (and its woke employees) raised concerns about potential misuse, prompting pushback from the Pentagon.

In January 2026, Secretary Hegseth issued an AI strategy memorandum redefining "Responsible AI" to permit "any lawful use", directly clashing with Anthropic's restrictions.

Negotiations stalled as Anthropic insisted on maintaining its "red lines" against autonomous weapons and domestic mass surveillance, while the DoW demanded unrestricted access aligned with other AI providers (e.g., OpenAI, Google, xAI) that agreed to "all lawful purposes."

On February 24, Hegseth met Anthropic CEO Dario Amodei and issued an ultimatum: comply by 5:01 p.m. ET on Friday, February 27, or face severe consequences, including:

- Cancellation of the $200 million contract.

- Designation of Anthropic as a "supply chain risk" (typically reserved for foreign adversaries like Huawei), potentially forcing defense contractors (e.g., Palantir, AWS, Anduril) to sever ties.

- Possible invocation of the Defense Production Act to compel Anthropic to provide unrestricted access.

Notably, Anthropic quietly dropped a central safety pledge from its Responsible Scaling Policy this week, according to a report by TIME.

The changes loosen a commitment that once barred the Claude AI developer from training advanced AI systems without guaranteed safeguards in place.

The company’s earlier statement pledged to build general-purpose AI that “safely benefits humanity, unconstrained by a need to generate financial return.”

The updated version now states its goal is “to ensure that artificial general intelligence benefits all of humanity.”

The move reshapes how the company positions itself in the AI race against rivals OpenAI, Google, and xAI. Anthropic has long cast itself as one of the industry’s most safety-focused labs, but under the revised policy, Anthropic no longer promises to halt training if risk mitigations are not fully in place.

“We felt that it wouldn't actually help anyone for us to stop training AI models,” Anthropic’s chief science officer, Jared Kaplan, told TIME.

“We didn't really feel, with the rapid advance of AI, that it made sense for us to make unilateral commitments … if competitors are blazing ahead.”

A slightly bent knee... but it didn't help with Hegseth seeking more leeway to create his WarAI.

As Axios reports, the Pentagon has already surveyed major contractors (e.g., Boeing, Lockheed Martin) about their reliance on Anthropic's technology, signaling preparations for escalation.

A Boeing executive told Axios:

"We sought their partnership [in the past] and ultimately could not come to an agreement. They were somewhat reluctant to work with the defense industry."

A Lockheed spokesperson confirmed the company was contacted by the DoW regarding an analysis of its exposure and reliance on Anthropic ahead of "a potential supply chain risk declaration."

Referring to the possible supply chain risk designation earlier this week, a senior Defense official told Axios:

"It will be an enormous pain in the ass to disentangle, and we are going to make sure they pay a price for forcing our hand like this."

Elon Musk's xAI recently signed a deal to move into the military's classified systems, under the "all lawful use" standard that Anthropic has rejected.

Google and OpenAI, whose models are already available in unclassified systems, are also in negotiations about moving into the classified space.

So with all that background in mind, how is the market positioned?

Positioning

The short answer is... very short software stocks (thanks in large part to investors questioning terminal values for various SaaS firms given the recent avalanche of Claude Code disruptions).

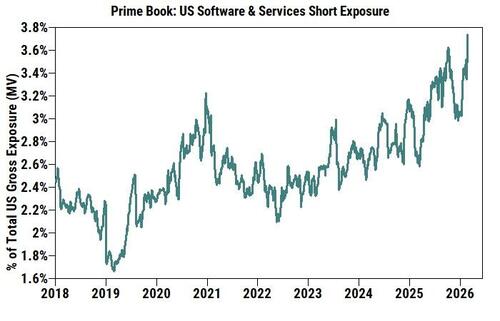

As Goldman's Vincent Lin notes, in $ terms, Software and IT Services were the #1 and #2 most shorted US industries on the Prime book as of yesterday (Feb 24th), on a week/week, month/month, and YTD basis. This is true not only within TMT but also across all US subsectors. Short exposure (as % of total US single stock gross MV) has risen sharply to the highest level on our record (since 2016).

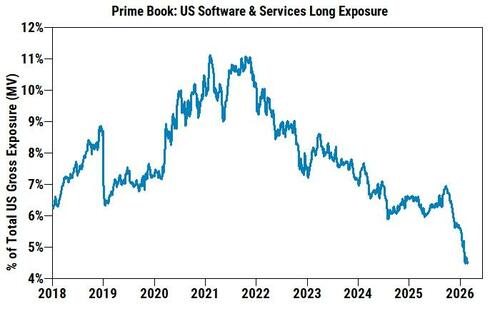

At the same time, long exposure across US Software & Services stocks has fallen to the lowest level on our record. Aggregate long/short ratio in US Software & Services now stands at 1.14 (vs. 1.82 at the start of 2026 and historical peak of 4.74), also the lowest level on our record.

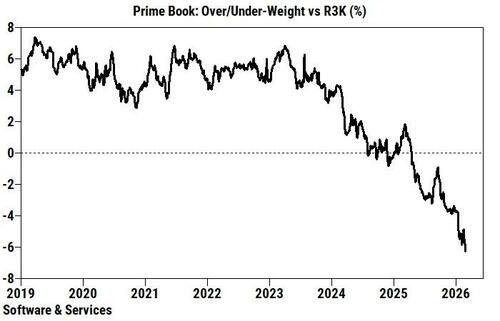

Hedge funds are now U/W Software & Services stocks by -6.8 pts relative to the Russell 3000 index (vs. -3.9 pts U/W at the start of 2026 and historical peak of +7.4 pts O/W), the most U/W level on our record.

Given all that, the top Goldman trader warns that while investor sentiment remains cautious and the debate on terminal values is not going away any time soon, he thinks the recent bounce in Software and select IT Services stocks could have legs in the near term due to positioning and technicals.

Will Hegseth's blacklisting be the catalyst?

What If?

One veteran market-watcher summed up the optimistic side:

"I mean it's evil military surveillance (boo) vs. woke AI company (boo) and Anthropic isn't going to miss out on that sweet, sweet military contract (and espionage potential?) to signal virtue."

There are a lot of shorts hoping that is true... and hoping that Hegseth taking a first step toward a potential designation of Anthropic as a "supply chain risk" is just posturing.

This standoff highlights broader tensions over civilian AI firms' control versus government/military authority in national security contexts.

Anthropic's unique position (as the primary classified model provider) gives it leverage but also vulnerability. Outcomes could reshape AI-defense partnerships, influence "responsible AI" standards, and affect companies reliant on Claude.

Buying deep OTM calls on IGV seems like a cheap way to play this possibility.
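For readers less familiar with why a deep OTM call is "cheap", here is a minimal sketch of the payoff at expiry. The strike, premium, and spot prices below are hypothetical round numbers chosen for illustration, not actual IGV quotes, and the function name `long_call_pnl` is our own. It ignores commissions, early exit, and time value before expiry.

```python
def long_call_pnl(spot_at_expiry: float, strike: float, premium: float) -> float:
    """Profit or loss per share at expiry for one long call option."""
    # A call is worth spot minus strike at expiry, or nothing if spot is below strike.
    intrinsic = max(spot_at_expiry - strike, 0.0)
    # The buyer paid the premium up front, so subtract it from the payoff.
    return intrinsic - premium


if __name__ == "__main__":
    # Hypothetical inputs (assumptions, not market data).
    strike = 120.0   # strike well above the current price, i.e. deep OTM
    premium = 0.50   # small up-front cost per share

    for spot in (100.0, 120.0, 120.5, 130.0):
        pnl = long_call_pnl(spot, strike, premium)
        print(f"spot at expiry {spot:7.2f} -> P&L per share {pnl:6.2f}")
```

The point of the sketch: the most the buyer can lose is the premium, while the upside grows one-for-one with the price above the strike, which is the asymmetry a short squeeze would reward.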

Either way, we will know by 1701ET on Friday, February 27.